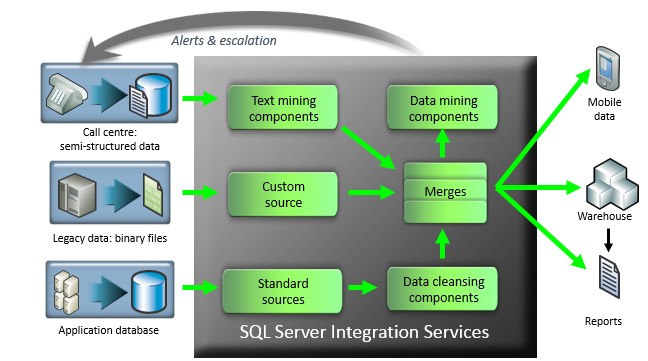

While designing ETL processes, it is not always advised that there should be a key that should identify the lineage. Finally, a “ BatchID” can be appended to each of the records that will tell us about the execution details such as date and time of execution, duration of the run, etc. Along with that, we can also log another field, “ TransformationID”, which can tell us about the specific transformations that each of the records has undergone in order to be loaded in the data warehouse. In order to know from which source a particular record originated, we need to implement a “ SourceSystemID” as the lineage key, which will ultimately enable us to track back to the source database when anything in the warehouse goes wrong. In such a system, the data from the sources might be different from each other however, in the final data warehouse, the format for storing all the data will be identical. This flow is implemented, as mentioned in the article above. If you see in the diagram above, I have marked the data flow from all the sources in separate colors. Finally, all the data from the databases and the file will be pushed into the warehouse for analysis.

For example, the data that comes from the DB1 only needs to go through “ Transformation 1” data from DB2 needs to go through “ Transformation 2” and “ Transformation 3” and data from the file needs to undergo each of the transformations. Now, let us assume that all the data does not need to go through each of the transformations. Finally, the data is being stored in the Warehouse database from where further reporting can be carried out. Once the data is loaded into the ETL pipeline, there are a series of transformations that can be applied to the data as it moves. As you can see, there are three sources from where data is generated – two databases and one file. Let us consider the above simple ETL process. You can add much complex, detailed information in order to establish a correct lineage for tracking the records back. Other details can also be included, like batch information that tells us how many times the ETL process was being executed, etc. Once the records are within the ETL pipeline, we can assign a key to those records, which will help us identify the source system and tell us from which data source the records are being imported. We just have to identify a set of records for the first time, as it enters the ETL pipeline. In my opinion, the process of implementing Data Lineage in an ETL process is very simple. How to implement Data Lineage in practical Introducing a lineage key in the ETL process makes the documentation of the project a bit easier as we already know how the data has been transformed in order to come to a particular set Makes the data movement process more transparent. In case we are not able to track down such missed records, it will lead us to incorrect figures being reported in the warehouse When we move data across multiple systems for data processing, it might happen that a specific set of records were being missed out due to unknown reasons. Simplify the process of moving data across multiple systems. This means there should be something unique in the records of the data warehouse, which will tell us about the source of the data and how it was transformed during the processing It is used to identify the source of a single record in the data warehouse. It is a piece of simple metadata information that helps us detect gaps in the data processing pipeline and enables us to fix issues later.Īs it goes by the name, Data Lineage is a term that can be used for the following: In order to be able to detect what the source of a particular record is, we need to implement something known as Data Lineage. There can also be aggregations or other calculations made on this raw data before finally moving into a data warehouse or preparing it for reporting.

For example, when the ETL receives the raw data from the source, there may be operations applied to it like filtering, sorting, merging, or splitting two columns, etc. While transforming the data in the ETL pipeline, it has to go through multiple steps of transformations in order to achieve the final result.

In order to process this data, we use a variety of ETL tools, which in turn makes the data transformation possible in a managed way. There are systems that generate data every second and are being processed to a final reporting or monitoring tool for analysis. In this modern world, where companies are dealing with a humongous amount of data every day, there also lies a challenge to efficiently manage and monitor this data. In this article, I am going to explain what Data Lineage in ETL is and how to implement the same.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed